Oracle SQLcl version 26.1 came out last week.

- Download

- Release Notes

From the release notes, we have this nugget –

The schema information tool now supports filtering and the ability to retrieve details for other schemas

So what does this mean?

I’ve used my handy agent to summarize this tool for me, but the TL;DR is simple: you can now ask our MCP Server to deliver information about ANY schema in the database that you can access (vs just the connected schema), and you can also filter which metadata you get back and choose the level of detail.

My question: Give me an overview of our schema information tool – I want to use it to get specific data from my Oracle database to help with future NL2SQL tasks here.

Agent output

Here are the practical details of the schema-information tool and how to use it effectively for NL2SQL preparation:

sqlcl:schema-information – Summary

This tool performs Oracle schema introspection and returns structured metadata, essentially giving the LLM a map of your database so it can write accurate SQL.

Parameters

| Parameter | Default | Notes |
|---|---|---|
| schema | Current connected schema | Any schema you have access to, for example HR, SH, OE |
| level | BRIEF | BRIEF = name + type only; DETAILED = complete column/constraint/index info |
| objects_list | All objects | Comma-separated list to narrow down to specific tables/views/etc. |
| filter | % | SQL LIKE pattern on object names, for example EMPLOYEE% |
| executionType | SYNCHRONOUS | Use ASYNCHRONOUS for large schemas that take more than 2 seconds |
| model | UNKNOWN-LLM | Model name, for logging/telemetry purposes |
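To make the table concrete, here is a minimal Python sketch that validates a set of arguments and wraps them in an MCP `tools/call` request (JSON-RPC 2.0). It assumes the tool is registered as `schema-information` (the `sqlcl:` prefix above looks like the client's label for the server), that the parameter names from the table are passed through unchanged, and that omitted parameters fall back to the listed defaults. `build_schema_info_args` and `make_tool_call` are hypothetical helpers, not part of SQLcl.

```python
import itertools
import json

# Allowed values, taken from the parameter table above.
LEVELS = {"BRIEF", "DETAILED"}
EXECUTION_TYPES = {"SYNCHRONOUS", "ASYNCHRONOUS"}
_request_ids = itertools.count(1)


def build_schema_info_args(schema=None, level="BRIEF", objects=None,
                           name_filter=None, execution_type="SYNCHRONOUS",
                           model=None):
    """Hypothetical helper: validate and assemble schema-information arguments.

    Optional parameters left as None are omitted, assuming the server then
    applies the defaults listed in the table.
    """
    level = level.upper()
    execution_type = execution_type.upper()
    if level not in LEVELS:
        raise ValueError(f"level must be one of {sorted(LEVELS)}")
    if execution_type not in EXECUTION_TYPES:
        raise ValueError(f"executionType must be one of {sorted(EXECUTION_TYPES)}")

    args = {"level": level, "executionType": execution_type}
    if schema:
        args["schema"] = schema
    if objects:
        # Assumed to be a single comma-separated string, per the table notes.
        args["objects_list"] = ",".join(o.strip() for o in objects)
    if name_filter:
        args["filter"] = name_filter  # SQL LIKE pattern, e.g. EMPLOYEE%
    if model:
        args["model"] = model
    return args


def make_tool_call(arguments, tool_name="schema-information"):
    """Wrap arguments in an MCP tools/call request (JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # Quick inventory of HR, then full detail for three tables.
    brief = make_tool_call(build_schema_info_args(schema="HR"))
    detailed = make_tool_call(build_schema_info_args(
        schema="HR", level="DETAILED",
        objects=["EMPLOYEES", "DEPARTMENTS", "JOBS"]))
    for request in (brief, detailed):
        print(json.dumps(request))
```

In practice your agent builds these requests for you; the sketch just shows which knobs exist and how they combine.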

Two Key Modes for NL2SQL

Quick inventory: use BRIEF to see what objects are in the schema before deciding what to pull in detail:

schema: HR, level: BRIEF

Deep context for NL2SQL: use DETAILED with a targeted objects_list to get the column names, data types, constraints, and relationships for the tables the LLM will use to write SQL:

schema: HR, level: DETAILED, objects_list: EMPLOYEES,DEPARTMENTS,JOBS

Practical Strategies for NL2SQL

Rather than dumping your entire schema into an expensive and noisy prompt, a good pattern is:

- Run BRIEF on the whole schema to inventory everything
- Identify the relevant tables for your NL2SQL domain
- Run DETAILED only on those tables
- Paste the output as a schema context block in your NL2SQL prompt
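The four steps above can be sketched as three small Python helpers. This is an illustration under assumptions: the BRIEF result is assumed to be parsed into a list of `{"name", "type"}` dicts (the real response format may differ), a naive keyword match stands in for the judgment call in step 2, and `select_relevant`, `detailed_args`, and `context_block` are hypothetical names.

```python
def select_relevant(inventory, keywords):
    """Step 2: keep tables/views whose names contain any domain keyword.

    `inventory` is assumed to be a list of {"name": ..., "type": ...}
    dicts parsed from a BRIEF response; the real output format may differ.
    """
    wanted = [k.upper() for k in keywords]
    return [obj["name"] for obj in inventory
            if obj["type"] in ("TABLE", "VIEW")
            and any(k in obj["name"].upper() for k in wanted)]


def detailed_args(schema, names):
    """Step 3: arguments for a DETAILED call limited to the chosen objects."""
    return {"schema": schema, "level": "DETAILED",
            "objects_list": ",".join(names)}


def context_block(schema, detailed_text):
    """Step 4: wrap the DETAILED output for pasting into an NL2SQL prompt."""
    return (f"### Schema context ({schema})\n"
            f"{detailed_text.strip()}\n"
            "### End schema context")


if __name__ == "__main__":
    # Hypothetical BRIEF result, for illustration only.
    brief = [
        {"name": "EMPLOYEES", "type": "TABLE"},
        {"name": "DEPARTMENTS", "type": "TABLE"},
        {"name": "EMP_DETAILS_VIEW", "type": "VIEW"},
        {"name": "EMPLOYEES_SEQ", "type": "SEQUENCE"},
    ]
    names = select_relevant(brief, ["EMP", "DEPARTMENT"])
    print(detailed_args("HR", names))
```

The point of the split is cost control: the cheap BRIEF call covers everything, while the expensive DETAILED call and the prompt only carry the handful of objects that matter.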

Trying it for real, here’s my prompt:

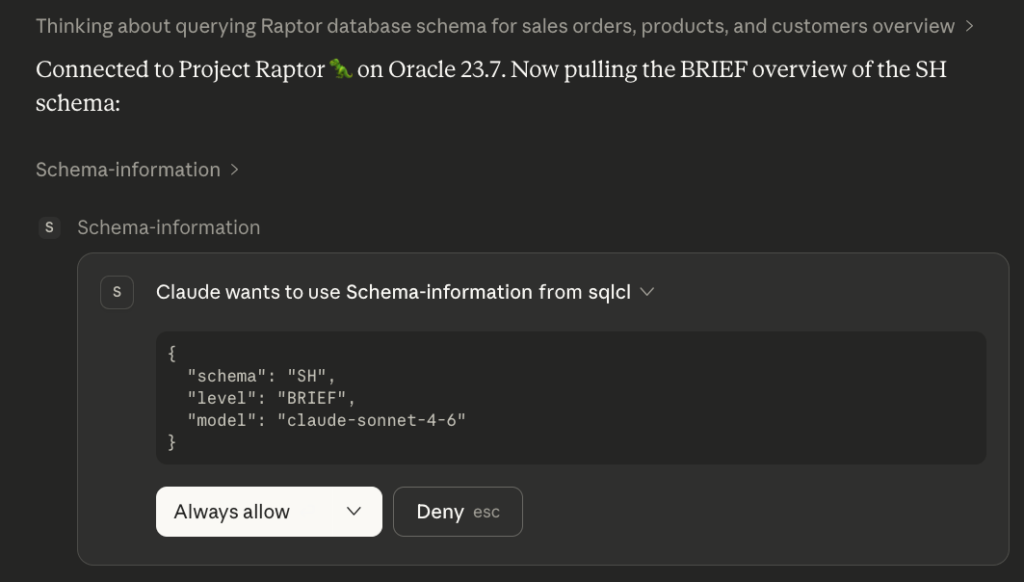

My question: connect to my Raptor database, use the information tool to get a simple overview of the SH schema.

I want an overview of sales orders, their products, and the customers involved. Use the MCP tool to get what you need for any NL2SQL query in this chat, rather than running data dictionary queries yourself.

And the agent proceeded to carry out its plan, including a call to the schema information tool.

And the agent’s response:

Here’s an overview of the SH schema, focused on your sales/product/customer domain:

SH Schema – NL2SQL Context

Core Tables (your focus area)

SALES: fact table, partitioned (15 partitions). PROD_ID, CUST_ID, TIME_ID, CHANNEL_ID, PROMO_ID, QUANTITY_SOLD, AMOUNT_SOLD → joins to each dimension table. No surrogate PK; the grain is the combination of all FK columns.

CUSTOMERS: rich dimension. CUST_ID, CUST_FIRST_NAME, CUST_LAST_NAME, CUST_GENDER, CUST_YEAR_OF_BIRTH, CUST_MARITAL_STATUS, CUST_CITY, CUST_STATE_PROVINCE, COUNTRY_ID, CUST_INCOME_LEVEL, CUST_CREDIT_LIMIT, CUST_EMAIL → FK to COUNTRIES

PRODUCTS: hierarchical product dimension. PROD_ID, PROD_NAME, PROD_DESC, PROD_SUBCATEGORY, PROD_CATEGORY, PROD_LIST_PRICE, PROD_MIN_PRICE, PROD_STATUS, SUPPLIER_ID → has SCD columns (PROD_EFF_FROM, PROD_EFF_TO, PROD_VALID)

TIMES: very rich time dimension. TIME_ID (DATE), DAY_NAME, CALENDAR_MONTH_DESC, CALENDAR_QUARTER_DESC, CALENDAR_YEAR, FISCAL_YEAR, FISCAL_QUARTER_DESC → calendar and fiscal hierarchies

CHANNELS: small lookup. CHANNEL_ID, CHANNEL_DESC, CHANNEL_CLASS

COUNTRIES: geographic rollup. COUNTRY_ID, COUNTRY_NAME, COUNTRY_SUBREGION, COUNTRY_REGION

Key Relationships for NL2SQL

```
SALES ──┬── CUSTOMERS  (CUST_ID)    → who bought
        ├── PRODUCTS   (PROD_ID)    → what they bought
        ├── TIMES      (TIME_ID)    → when they bought
        ├── CHANNELS   (CHANNEL_ID) → how they bought
        └── PROMOTIONS (PROMO_ID)   → any promo involved

CUSTOMERS ── COUNTRIES (COUNTRY_ID) → where they are from
```

Also Available (supporting context)

- COSTS: partitioned cost fact table (unit cost, unit price by product/time/channel)
- PROMOTIONS: promo metadata with date range and category hierarchy
- SUPPLEMENTARY_DEMOGRAPHICS: expanded customer attributes (education, employment, household size, sports interests)
- PROFITS (view): a ready-to-use view that likely combines sales and costs
- CAL_MONTH_SALES_MV / FWEEK_PSCAT_SALES_MV: materialized views for fast aggregation

Ready for NL2SQL queries! If you want, I can pull DETAILED metadata on any of these tables (especially SALES, CUSTOMERS, or PRODUCTS) before we start; this will provide the exact data types, constraints, and indexes for the LLM context.
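The relationship map in that summary is exactly the context an NL2SQL step needs to get joins right. As an illustration only, here is a small Python sketch that encodes those SH join keys as data and derives a FROM/JOIN clause from them. `SH_JOINS` and `join_clause` are hypothetical, cover only the relationships listed above, and are not something SQLcl produces.

```python
# SH join keys from the relationship map above:
# table -> (table it attaches to, shared join column)
SH_JOINS = {
    "CUSTOMERS": ("SALES", "CUST_ID"),
    "PRODUCTS": ("SALES", "PROD_ID"),
    "TIMES": ("SALES", "TIME_ID"),
    "CHANNELS": ("SALES", "CHANNEL_ID"),
    "PROMOTIONS": ("SALES", "PROMO_ID"),
    "COUNTRIES": ("CUSTOMERS", "COUNTRY_ID"),
}


def join_clause(tables, schema="SH"):
    """Build a FROM/JOIN clause rooted at the SALES fact table.

    Intermediate tables are added automatically, e.g. asking for
    COUNTRIES also joins CUSTOMERS, which links it to SALES.
    Each table is aliased by its own name to keep the ON clauses readable.
    """
    ordered = []

    def add(table):
        if table == "SALES" or table in ordered:
            return
        parent, _ = SH_JOINS[table]  # KeyError for tables not in the map
        add(parent)                  # join the parent before the child
        ordered.append(table)

    for table in tables:
        add(table.upper())

    lines = [f"FROM {schema}.SALES SALES"]
    for table in ordered:
        parent, column = SH_JOINS[table]
        lines.append(f"JOIN {schema}.{table} {table} "
                     f"ON {parent}.{column} = {table}.{column}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(join_clause(["countries", "products"]))
```

Whether you hand an LLM a compact map like this or the raw DETAILED output is a judgment call; the map is smaller, while the DETAILED output also carries data types and constraints.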

What just happened

- I used our MCP Server to work with my Oracle AI Database…

- with a connection tied to the database user, HR

- to get information about a different schema, SH

- without running ANY data dictionary queries

Follow-up question –

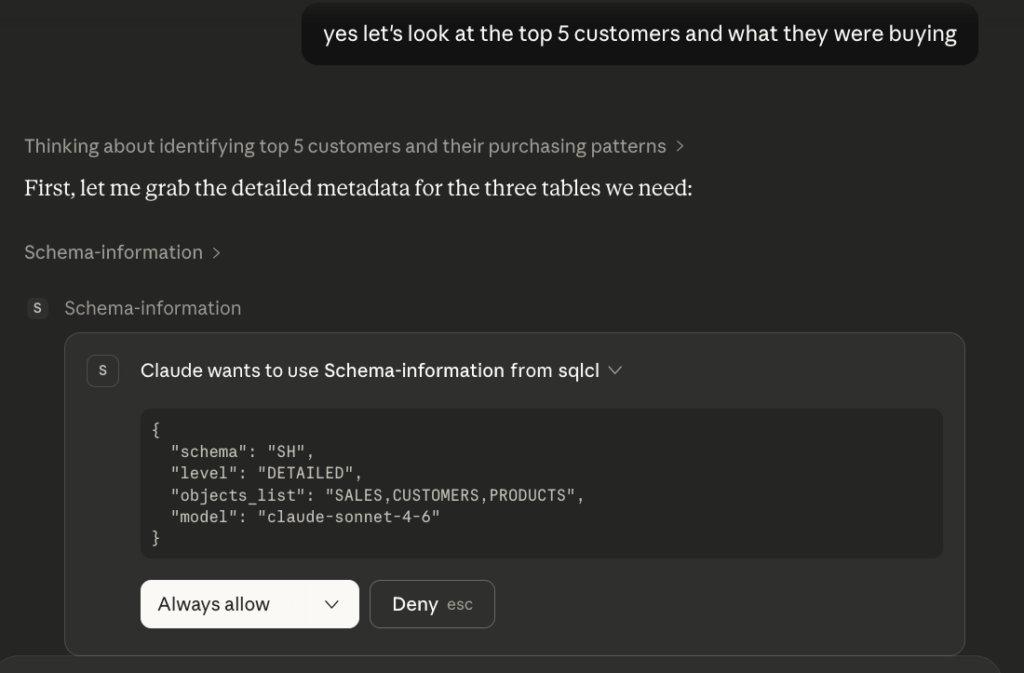

My question: find the top 5 customers and what they buy

My agent wants more information, so it calls the information tool again, but this time at the DETAILED level and only for 3 specific objects (in this case, tables).

Once the metadata is returned, the agent can continue with the NL2SQL part of the job and execute the SQL.

Again, here’s the agent’s response:

Thought about identifying the top 5 customers and their purchasing patterns

First, let me grab the detailed metadata for the three tables we need:

Designed SQL queries for customer spend analysis

Schema context confirmed. Now running a query for the top 5 customers by total spend, along with each customer’s top product category by spend:

run-sql

```sql
WITH top_customers AS (
    SELECT
        c.CUST_ID,
        c.CUST_FIRST_NAME || ' ' || c.CUST_LAST_NAME AS customer_name,
        c.CUST_CITY,
        c.CUST_STATE_PROVINCE,
        SUM(s.AMOUNT_SOLD) AS total_spent,
        SUM(s.QUANTITY_SOLD) AS total_items,
        COUNT(*) AS total_transactions
    FROM SH.SALES s
    JOIN SH.CUSTOMERS c ON s.CUST_ID = c.CUST_ID
    GROUP BY c.CUST_ID, c.CUST_FIRST_NAME, c.CUST_LAST_NAME, c.CUST_CITY, c.CUST_STATE_PROVINCE
    ORDER BY total_spent DESC
    FETCH FIRST 5 ROWS ONLY
),
top_category AS (
    SELECT
        s.CUST_ID,
        p.PROD_CATEGORY,
        SUM(s.AMOUNT_SOLD) AS cat_spent,
        RANK() OVER (PARTITION BY s.CUST_ID ORDER BY SUM(s.AMOUNT_SOLD) DESC) AS rn
    FROM SH.SALES s
    JOIN SH.PRODUCTS p ON s.PROD_ID = p.PROD_ID
    WHERE s.CUST_ID IN (SELECT CUST_ID FROM top_customers)
    GROUP BY s.CUST_ID, p.PROD_CATEGORY
)
SELECT
    tc.customer_name,
    tc.cust_city || ', ' || tc.cust_state_province AS location,
    TO_CHAR(tc.total_spent, '$999,999.99') AS total_spent,
    tc.total_transactions,
    tc.total_items,
    cat.PROD_CATEGORY AS top_category
FROM top_customers tc
JOIN top_category cat ON tc.CUST_ID = cat.CUST_ID AND cat.rn = 1
ORDER BY tc.total_spent DESC
```

Here are your top 5 customers based on lifetime spend:

| Customer | Location | Total Spent | Transactions | Top Category |
|---|---|---|---|---|
| Dora Rice | Yokohama, Kanagawa | $103,412.66 | 248 | Golf |
| Loli Katz | Oran, MO | $99,578.09 | 256 | Golf |
| Eppling’s Thesis | Solingen, North Rhine-Westphalia | $98,585.96 | 266 | Golf |
| Xerxes Abbassi | Wakefield, England | $98,006.16 | 235 | Golf |
| Rose Lehman | Yokohama, Kanagawa | $97,573.55 | 240 | Golf |

Several things emerged:

- Golf dominates: the number one category for every top spender is Golf, which is worth exploring. It may be the most expensive product category in this data set.

- Quantity sold equals the number of transactions for every one of them, meaning each transaction is one unit: no bulk purchases at the top level.

- International spread: Japan, Germany, the UK, and the US are all represented, so the SH schema’s global customer base is starting to show.

- The spend range is fairly tight ($97K–$103K), suggesting consistently high-frequency shoppers rather than large one-time buyers.
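The agent’s SQL follows a common two-stage pattern: rank customers by total AMOUNT_SOLD and keep the top N, then rank each survivor’s categories by spend and keep rank 1. The in-memory Python version below mirrors that logic over a few made-up rows (toy values, not SH data) so the steps are easy to follow; `top_customers_with_category` is a hypothetical name. One behavioral difference: the SQL uses RANK(), which returns more than one row with rn = 1 when two categories tie, so a customer could appear twice, whereas Python’s `max()` keeps only the first category it encounters.

```python
from collections import defaultdict

# Hypothetical (cust_id, category, amount_sold) rows standing in for
# SALES joined to PRODUCTS; these are toy values, not SH data.
ROWS = [
    (1, "Golf", 500.0), (1, "Golf", 300.0), (1, "Electronics", 200.0),
    (2, "Electronics", 850.0), (2, "Golf", 100.0),
    (3, "Golf", 50.0),
]


def top_customers_with_category(rows, n):
    """Top-n customers by total spend, each with their highest-spend category."""
    total = defaultdict(float)                        # like the top_customers CTE
    by_cat = defaultdict(lambda: defaultdict(float))  # like the top_category CTE
    for cust, cat, amount in rows:
        total[cust] += amount
        by_cat[cust][cat] += amount

    # ORDER BY total_spent DESC FETCH FIRST n ROWS ONLY
    leaders = sorted(total, key=total.get, reverse=True)[:n]

    # rn = 1 per customer; unlike RANK(), max() keeps a single category on ties
    return [(cust, total[cust], max(by_cat[cust], key=by_cat[cust].get))
            for cust in leaders]


if __name__ == "__main__":
    for cust, spent, category in top_customers_with_category(ROWS, 2):
        print(cust, f"${spent:,.2f}", category)
```

If ties matter for your report, swapping RANK() for ROW_NUMBER() in the SQL would give the same one-row-per-customer behavior as this sketch.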

But Jeff, I have a BIG, ugly dictionary. It will take a while to get this schema information report back!

MCP tool requests time out after 60 seconds, so it’s important to support long-running requests asynchronously.

Just tell your agent to plan for long-running tasks, and it will know to switch to asynchronous task mode (executionType ASYNCHRONOUS). It will then request task updates until the results are ready.
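Asynchronous mode boils down to a submit-then-poll loop. Here is a generic Python sketch of that pattern. SQLcl’s actual task-status tool names and response fields aren’t shown in this post, so `submit` and `check_status` are hypothetical callables you would wire to whatever the server exposes, and the "RUNNING"/"DONE"/"FAILED" states are assumptions; the fakes in the `__main__` block exist only so the sketch runs.

```python
import time


def run_async(submit, check_status, poll_every=2.0, timeout=600.0,
              sleep=time.sleep, clock=time.monotonic):
    """Submit a long-running request, then poll until it finishes.

    `submit()` is assumed to return a task id; `check_status(task_id)` is
    assumed to return a (state, result) pair where state is one of
    "RUNNING", "DONE" or "FAILED". Both are hypothetical.
    """
    task_id = submit()
    deadline = clock() + timeout
    while True:
        state, result = check_status(task_id)
        if state == "DONE":
            return result
        if state == "FAILED":
            raise RuntimeError(f"task {task_id} failed: {result}")
        if clock() >= deadline:
            raise TimeoutError(f"task {task_id} still running after {timeout}s")
        # Each status check is its own short request, so no single call
        # has to outlast the 60 s tool timeout.
        sleep(poll_every)


if __name__ == "__main__":
    # Fake server: reports RUNNING twice, then DONE.
    states = iter([("RUNNING", None), ("RUNNING", None), ("DONE", "schema report")])
    print(run_async(lambda: "task-1", lambda _id: next(states), poll_every=0.01))
```

Injecting `sleep` and `clock` keeps the loop testable without real waiting; an agent does the equivalent by issuing repeated status requests between turns.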

The post Deep AI Enrichment for Oracle Database via MCP first appeared on ThatJeffSmith.
