Anthropic’s own data puts code output per engineer at 200% growth following internal Code Claude implementation. Review throughput doesn’t match that. The PR fell by the wayside, and subtle logic errors, removed authentication guards, field renames that broke the query three files away, all slipped through.

Claude Code Review’s answer is a multi-agent pipeline that sends dedicated agents in parallel, runs verification on each finding, and posts inline comments on different lines that find issues. Anthropic charges an average of $15-25 per review, above Team or Enterprise package seats.

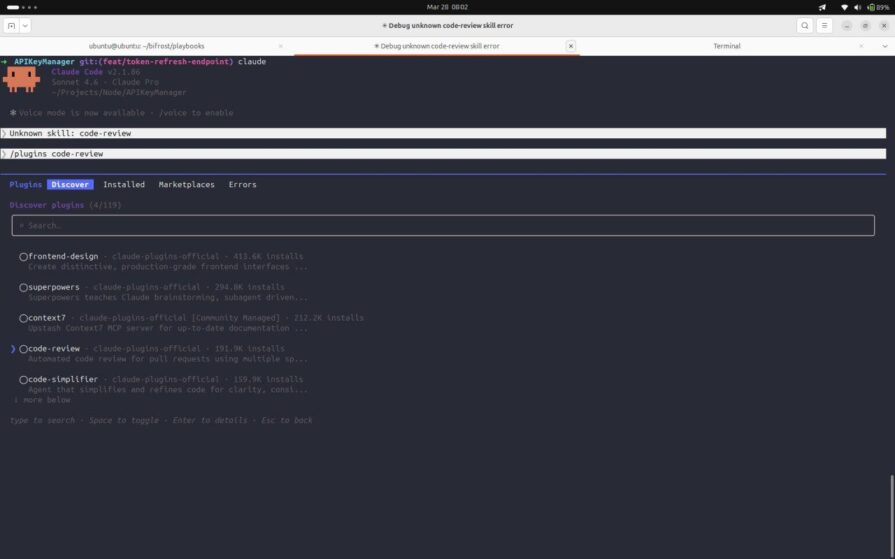

This section puts the tool through real PR on the tRPC TypeScript codebase, showing the full output with confidence scores, showing what passes the 80-point threshold and what is filtered, and provides clear cost estimates. If GitHub and the local plugin don’t agree, you’ll see both.

🚀 Sign up for The Replay newsletter

Replay is a weekly newsletter for developers and engineering leaders.

Delivered once a week, this is your curated guide to the most important conversations around frontend development, emerging AI tools, and the state of modern software.

How a five-agent pipeline works

When the review begins, the pipeline moves through four phases sequentially. This starts with the Haiku agent checking to see if the PR is eligible and scanning the repo looking for it CLAUDE.md files. Next, two agents run side by side, one summarizing the PR changes, the other summarizing the full differences. Then five special agents run in parallel on the differences. Finally, everything they tag has to go through verification before anything is posted.

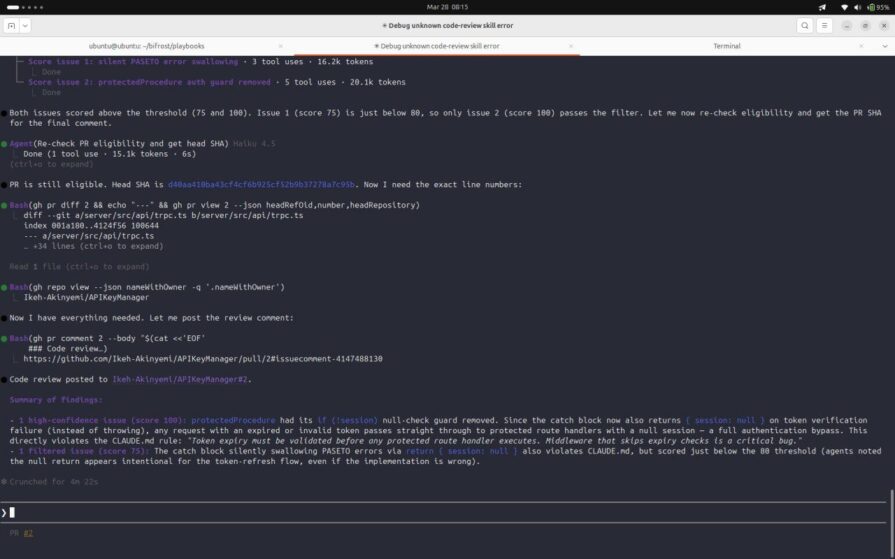

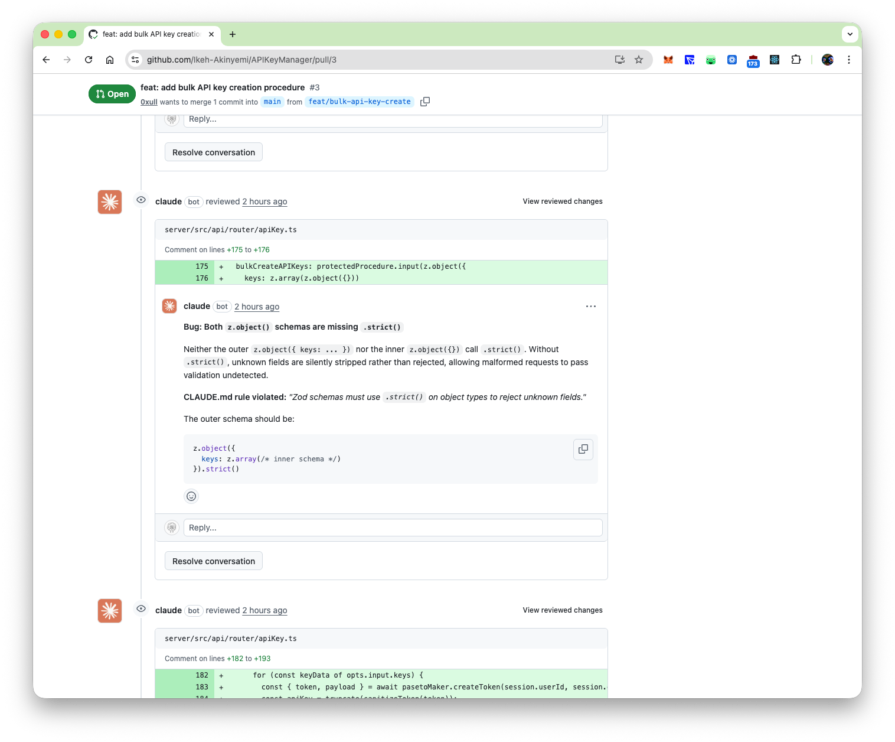

Each of the five agents adheres to a specified scope. Agent 1 checks CLAUDE.md compliance. Agent 2 performs superficial bug cleaning. Agent 3 looks at git blame and history for context. Agent 4 reviews previous PR comments to find recurring patterns. Agent 5 checks whether the code comments still align with the code. Each returns a list of issues with a confidence score from 0 to 100. The orchestrator then spins up a sub-agent that assigns a score to each finding, and anything below 80 is removed before posting. You can see that filter clearly in the local plugin output: in PR run #2, issue 1 came in at 75 and was filtered out, while issue 2 hit 100 and made it through.

The 80 threshold is the primary noise reduction mechanism. Agents who flag a real problem but can’t verify it with actual code are below the line. Here’s what the plug-in source confirms: the scoring subagent was created specifically to refute each candidate’s findings, not just to restate them. Findings that were able to survive the challenge at age 80 or older were the only findings that achieved PR.

Testing settings and environment

The test repository is Ikeh-Akinyemi/APIKeyManagerTypeScript tRPC API with PASETO token authentication, Sequelize ORM, and Zod input validation. Two files are added to the repository root before any PR is opened: CLAUDE.md coding explicit rules around error handling, token validation, and input schema, and REVIEW.md, covers what the reviewing agent should prioritize and skip.

That REVIEW.md used in all trials:

# Code Review Scope ## Always flag - Authentication middleware that does not validate token expiry - tRPC procedures missing Zod input validation - Sequelize multi-model mutations outside a transaction - Empty catch blocks that discard errors silently - express middleware that calls next() instead of next(err) on failure ## Flag as nit - CLAUDE.md naming or style violations in non-auth code - Missing .strict() on Zod schemas in low-risk read procedures ## Skip - node_modules/ - *.lock files - Migration files under db/migrations/ (generated, schema changes reviewed separately) - Test fixtures and seed data

Reviews are triggered in two ways. Code-Claude’s GitHub Actions workflow runs automatically on every PR push, authenticated using CLAUDE_CODE_OAUTH_TOKEN from Claude Max’s subscription, and posting inline annotations directly to GitHub diff. In parallel, local /code-review:code-review plugin, installed via /plugin code-review inside Claude Code, run against the same PR from the terminal. This brings up what GitHub doesn’t show: token costs per agent, trust scores, and which findings are filtered.

What is captured actually matters

Four PRs are open for opposition Ikeh-Akinyemi/APIKeyManagereach targets a different agent in the pipeline. Three findings are worth studying. The fourth, a clean JSDoc addition, does not result in problems caused by changes made to the code base.

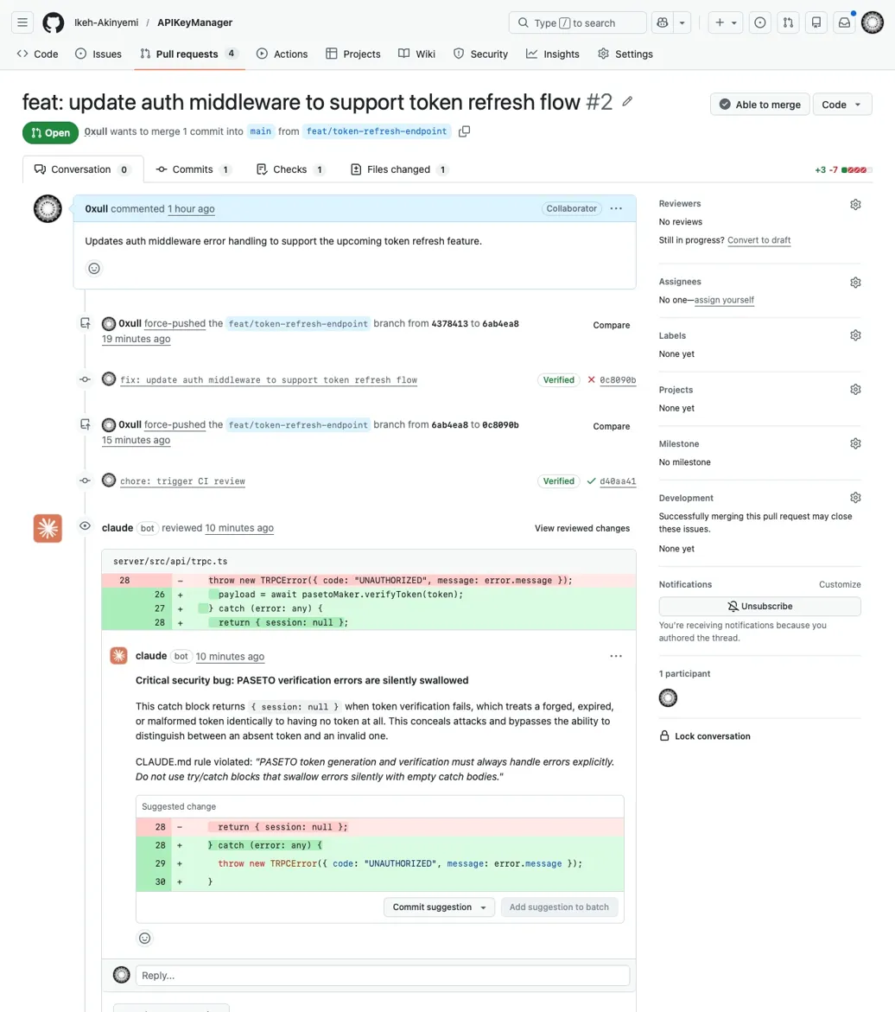

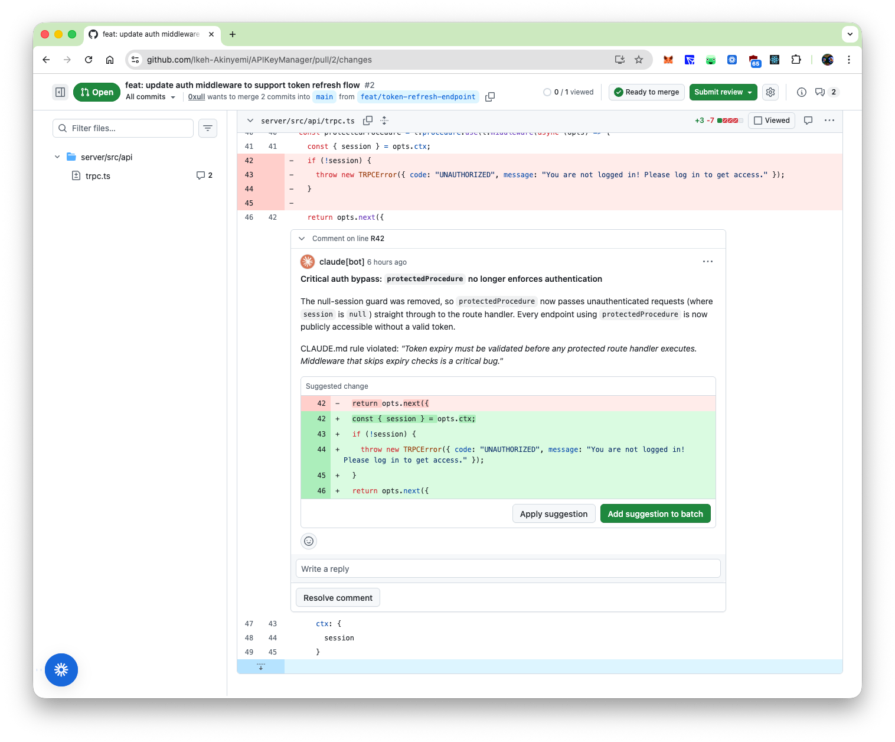

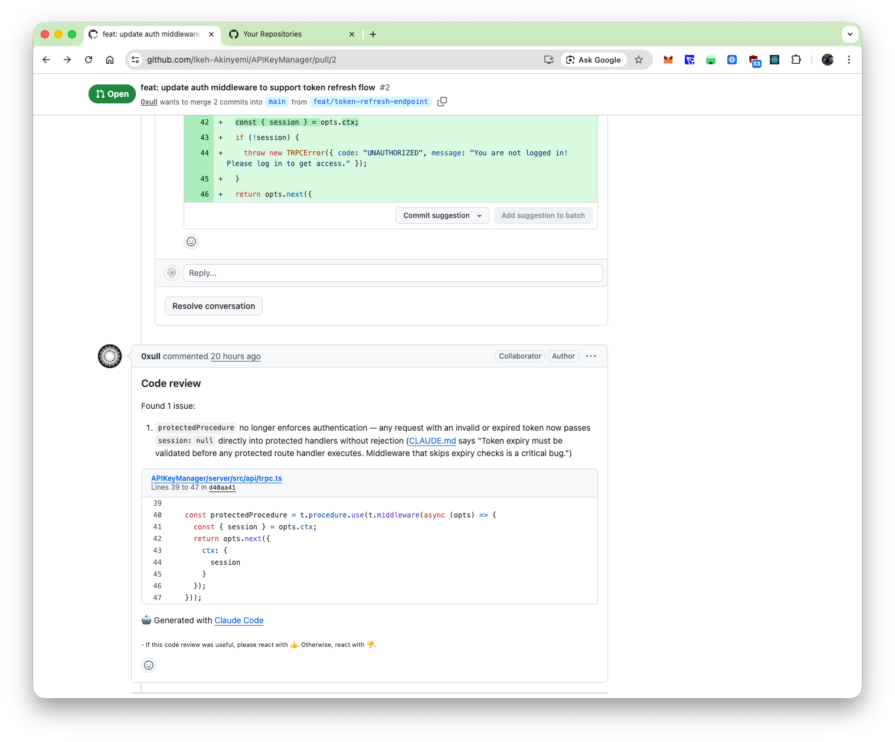

Finding 1: Bypass authentication via removed session guard (PR #2, bug detection agent)

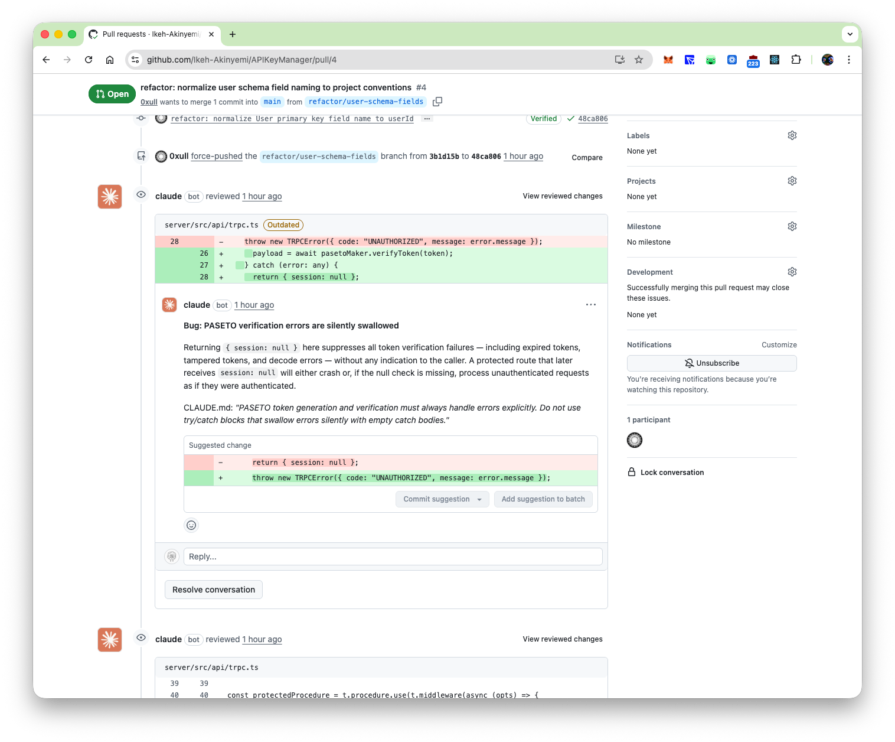

PR #2 remove the zero session guard from protectedProcedure in the server/src/api/trpc.tsframed in deployment messages as token refresh support. The bug detection agent scores this at a confidence level of 100, as seen in the previous screenshot. The compliance agent prints an accompanying silent PASETO capture block at 75, which is then dropped by the filter.

Finding 2: Cross-file regression of field renaming (PR #4, full codebase rationale)

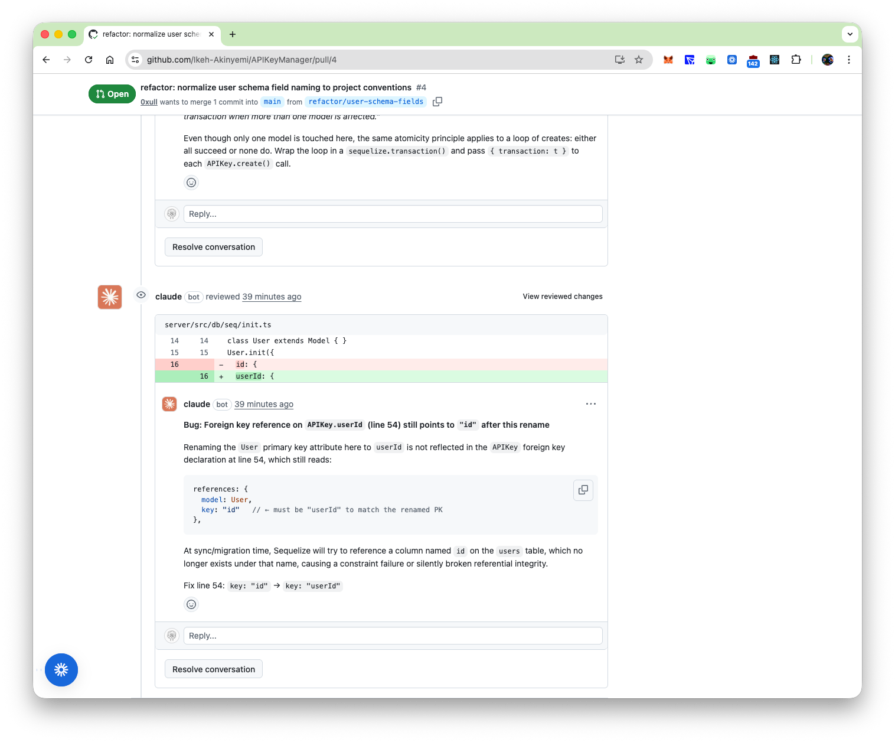

PR #4 rename fields in the User model in one file. The changed files look correct separately. However the pipeline marks stale references in a separate file that are not included in the diff, the query still uses the old column names.

Finding 3: Missing Zod validation flagged by compliance agent (PR #3, Zod violation)

Among the reviews posted in PR #3, a compliance agent read CLAUDE.mdidentify the necessary rules .strict() on all Zod object schemas, and marks tRPC procedures whose input schemas use terrain z.object({}) without it.

The pipeline catches all three as it reads the surrounding code base and files CLAUDE.mdit’s not just what’s changed.

What it marks doesn’t matter

Every finding posted is a real bug. But two output patterns create noise that is worth examining. The first is a pre-existing bug that appeared in an unrelated PR. Homework #4 changes one entry line server/src/db/seq/init.tsrename the User primary key from id to userId. Pipeline correctly captured the deprecated foreign key references in a separate file, but also posted four additional findings trpc.ts And apiKey.tsnone were introduced by PR #4. At scale, with code bases carrying accumulated debt, PR touching one file that generates review comments against five other files becomes a kind of overhead in itself.

The second pattern is the threshold filter, which makes the scoring decision. In PR #2, silent swallow PASETO got a score of 75 and was filtered. The terminal output states the reason: returning zero appears to be intentional for the token refresh stream. The assessment subagent reads the implementation message, inferred intent, and confidence. This finding is definitely a bug, but whether it’s noise suppression or information suppression depends on your team’s risk tolerance for authentication code. Dropping the threshold from 80 to 65 will bring it up, along with everything else the filter is holding back.

Conclusion

The pipeline proved its worth in the kind of PR that looks harmless but isn’t. One-line field renaming that silently breaks foreign keys in files outside of diff, auth guards removed under the guise of token refresh changes, transaction infinite bulk loop. None of these stand out, and each is marked with enough context to be fixed on the spot.

The setup is as important as the tools. A CLAUDE.md that truly reflects your team’s rules of truth, a REVIEW.md that determines what to flag and ignore, and thresholds tailored to your risk tolerance, that’s what differentiates the signal from the noise. The agents are out of the box. Whether it’s useful or not depends on how you configure it.

PakarPBN

A Private Blog Network (PBN) is a collection of websites that are controlled by a single individual or organization and used primarily to build backlinks to a “money site” in order to influence its ranking in search engines such as Google. The core idea behind a PBN is based on the importance of backlinks in Google’s ranking algorithm. Since Google views backlinks as signals of authority and trust, some website owners attempt to artificially create these signals through a controlled network of sites.

In a typical PBN setup, the owner acquires expired or aged domains that already have existing authority, backlinks, and history. These domains are rebuilt with new content and hosted separately, often using different IP addresses, hosting providers, themes, and ownership details to make them appear unrelated. Within the content published on these sites, links are strategically placed that point to the main website the owner wants to rank higher. By doing this, the owner attempts to pass link equity (also known as “link juice”) from the PBN sites to the target website.

The purpose of a PBN is to give the impression that the target website is naturally earning links from multiple independent sources. If done effectively, this can temporarily improve keyword rankings, increase organic visibility, and drive more traffic from search results.